Are We On The Verge Of Internet 2.0?

If you've been around the Internet and tech industries for a while, it can start to feel like there's often more hype than reality to a lot of buzzword-driven trends and so-called innovations. The advent of Web 2.0 in the early noughties had substance and ushered in a new era of more collaborative, social websites and applications. For all that has been written about Web 3.0, and despite all the heartfelt desire for another similar paradigm shift in online interaction, it's hard to see any really widespread impact on the way people build and use web technologies.

As for the Internet infrastructure underlying the web, there have, of course, been changes and upgrades along the way (see IPv6, TLS 1.3, QUIC), but none of these has produced visible improvements for the end-user of the kind that create a 'wow-factor' and drive adoption. Indeed, the lack of any market pull for these developments is a big part of why deployment often remains very patchy. But could that be about to change?

The Internet Is Slow

If, like me, you've been online since well before the turn of the millennium, then you'll know that waiting for things to happen used to be an intrinsic part of using the Internet. Waiting for dial-up modems to connect, waiting for email messages to be sent and delivered, waiting, sometimes days, for downloads of all kinds to complete. While it is obviously true that there's a big difference between always-on networks that support video streaming and intermittently-connected networks that barely support text-based interactions, many of the frustrations of those early days are still with us. Despite the widespread availability of multi-megabit – and even gigabit – broadband Internet connections, cries of "the Internet's slow today" haven't disappeared from the list of modern ills that can befall us. But why is this?

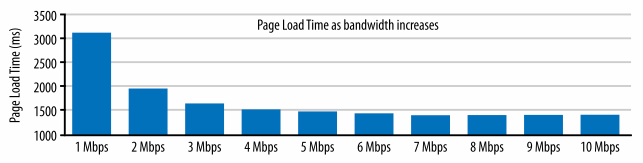

While Internet service providers continue to boast about the speed of their networks and offer different speed tiers that imply more speed equals better Internet, it's long been understood that additional bandwidth offers diminishing returns in terms of improved performance. The accompanying chart, from a study by Mike Belshe that ultimately led to the development of QUIC, illustrates this point clearly. While there are large gains to be made in web page load time as bandwidth increases above 1 Mbps, these gains become marginal once bandwidth is increased above 3 Mbps. So beyond a certain point, more bandwidth isn't going to improve the user's experience of Internet performance.
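To get a feel for why the curve flattens, here is a toy model in Python. It is only a sketch, not the methodology of the study: it assumes page load time is simply transfer time plus a fixed number of sequential round trips, and the page size, round-trip count, and 60 ms round-trip time are hypothetical values picked for illustration.

```python
def page_load_time(bandwidth_mbps, rtt_ms, page_bytes=1_000_000, round_trips=30):
    """Toy estimate of page load time in seconds.

    All defaults are hypothetical: a 1 MB page fetched with 30
    sequential round trips. Real pages overlap requests and are
    affected by many factors this model ignores.
    """
    # Time spent pushing the bytes through the link.
    transfer_s = page_bytes * 8 / (bandwidth_mbps * 1_000_000)
    # Time spent waiting on round trips, independent of bandwidth.
    latency_s = round_trips * rtt_ms / 1000
    return transfer_s + latency_s


if __name__ == "__main__":
    for mbps in (1, 3, 10, 100):
        print(f"{mbps:>4} Mbps: {page_load_time(mbps, rtt_ms=60):.2f} s")
```

In this model the round-trip term does not shrink as bandwidth grows, so once the transfer term is small, only lower delay makes the page load faster.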

The Network Is The Computer

The advent of cloud computing and the rise of progressive web applications mean the old Sun Microsystems slogan, 'the network is the computer', is now more true than ever. But because our (web-based) applications now depend on constant client-server interactions to function smoothly, the amount of time those interactions take (network delay, or latency) has become the focus for researchers trying to bring about a step-change in Internet performance.

“My theory here is when an interface is faster, you feel good, and ultimately what that comes down to is that you feel in control. The [application] isn’t controlling me, I’m controlling it. Ultimately that feeling of control translates to happiness in everyone. In order to increase the happiness in the world, we all have to keep working on this.”

I love this quote from Matt Mullenweg as it captures why the mission to minimize network latency isn't just about incremental improvement but can actually be transformative in terms of people's experience of the Internet and the kind of applications that can be widely supported. So if more bandwidth isn't going to help, what can we do to reduce network delays to their theoretical minimum?

Low Latency, Low Loss, Scalable Throughput (L4S)

At a recent meeting of the Internet Engineering Task Force (IETF), participants in the first interoperability test of a new experimental technology called L4S demonstrated some really impressive results. For all the details, see Greg White's presentation to the Transport Area Working Group. In a nutshell, they showed that L4S can deliver a delay variation of less than 10 milliseconds (ms) for 99.9% of traffic, compared with a delay variation of over 100 ms for untreated traffic. The goal for L4S is to reduce delays due to queues in the network to 'less than 1 ms on average and less than about 2 ms at the 99th percentile'. The work at the IETF interoperability hackathon and the results presented suggest the researchers and developers working on this technology are very close to meeting those objectives.

Now, shaving 90 ms to 100 ms off (worst-case) packet delays might not sound like much, until you remember the earlier point about how most websites and popular Internet applications tend to work today. Lots and lots of round trips between client and server mean the chance of an occasional spike in queuing delay marring your experience is quite high. Reducing delay variation for all traffic to close to the theoretical minimum would be a huge step up in performance. We'd go from everybody having those frustrating 'Internet is slow' experiences (regardless of their 'speed') to those experiences being rare or non-existent. Pull that off and I think we really could start talking about Internet 2.0.
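The point about round trips can be made concrete with a little arithmetic. The sketch below assumes each round trip independently hits a delay spike with some fixed probability; both that independence assumption and the example probabilities are hypothetical simplifications, not measurements from the L4S tests.

```python
def chance_of_spike(per_trip_probability, round_trips):
    """Probability that at least one of `round_trips` exchanges is delayed.

    Assumes (hypothetically) that each round trip is hit by a
    queuing-delay spike independently with the same probability.
    """
    # P(at least one spike) = 1 - P(no spike on any round trip)
    return 1 - (1 - per_trip_probability) ** round_trips


if __name__ == "__main__":
    for p in (0.01, 0.001):
        print(f"p={p}: {chance_of_spike(p, round_trips=100):.1%}")
```

Under these assumptions, an interaction made up of 100 round trips has roughly a 63% chance of hitting at least one spike when 1 in 100 round trips is affected, falling to under 10% when only 1 in 1,000 is. Small per-packet improvements compound across a chatty application.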

Is There A Catch? There's A Catch.

Sounds great, right? Of course, there's a catch. There's always a catch. There are typically at least three participants in any Internet-mediated communication: the sender, the receiver, and the network in between. L4S requires changes to all three to deliver all of its benefits. Needing so many players with disparate interests and incentives to take action, and potentially even to coordinate, is a tall order. In fact, it's exactly the kind of incentive problem often cited when explaining why other potentially beneficial new technologies haven't seen widespread adoption on the Internet.

In the case of L4S, though, there is a reason to be optimistic, and that reason is the 'wow-factor' I mentioned earlier. The hope among the folks working on L4S is that it will be possible to build and demonstrate compelling product offerings that depend on L4S-based levels of performance, and that these will help drive adoption across the industry. The array of companies participating in the interoperability hackathon at IETF certainly suggests widespread interest from device manufacturers, network operators, and Internet application service providers might already have gelled.

We could be on the verge of a real step-change in Internet application responsiveness to a new paradigm where consistently low delay for all traffic gives end-users unprecedented levels of confidence and satisfaction (Matt Mullenweg's 'happiness') every time they use the network. We plan to continue tracking and reporting on L4S developments, so do follow @isoc_pulse to make sure you hear how the story unfolds without delay.

More Information About L4S

- L4S Architecture document (work-in-progress)

- IETF L4S Interop presentation

- Apple's WWDC talk on 'Reduce networking delays for a more responsive app'

- Ericsson & Deutsche Telekom demo L4S in 5G

- NVIDIA explain the potential impact on Internet gaming

Photo by Mathew Schwartz on Unsplash